Germany Is Building the Biometric Dragnet the EU Explicitly Banned

Key Takeaways

- Germany's Ministry of Justice wants police to match crime footage against all publicly available images on the internet using biometric AI

- Experts confirm this is technically impossible without first building a comprehensive biometric database of every face on the public internet

- EU AI Act Article 5(1)(e), in force since February 2025, explicitly bans building facial recognition databases through untargeted internet scraping, with no law enforcement exception

- Germany's own Federal Constitutional Court ruled in 2023 that automated dragnet data analysis without sufficient legal thresholds violates the constitutional right to informational self-determination

- Civil rights organizations, including AlgorithmWatch and the Gesellschaft für Freiheitsrechte, say the draft law cannot be fixed by amendment and must be withdrawn

Germany's Latest "Safety" Tool Is a Mass Surveillance Blueprint

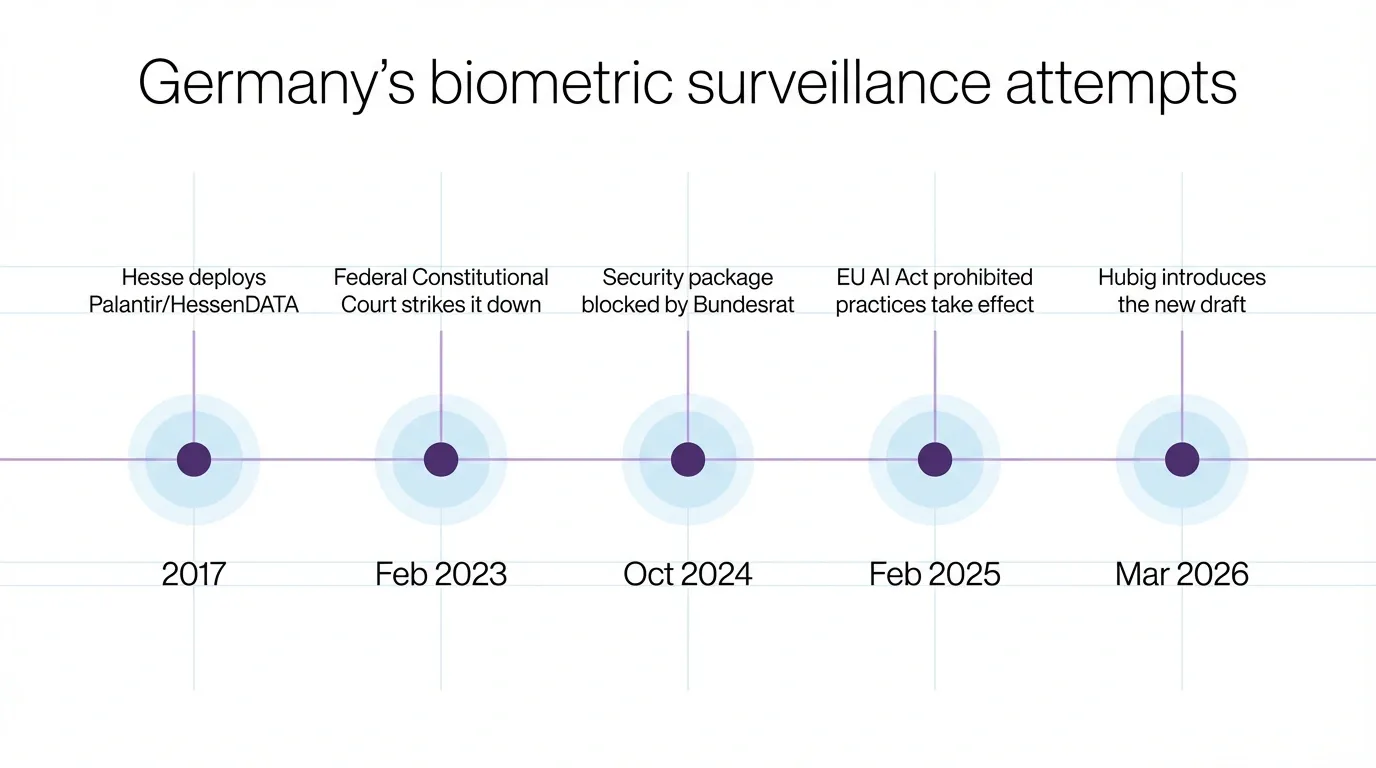

Governments across Europe have spent years dressing surveillance infrastructure in the language of safety, and Germany is no newcomer to that pattern. Its authorities have tested live facial recognition at train stations, deployed biometric identification at border crossings without informing data protection authorities, and watched the Federal Constitutional Court draw limits that the next legislative session promptly tried to engineer around.

In March 2026, Justice Minister Stefanie Hubig introduced a draft bill amending the Code of Criminal Procedure, proposing two new investigative tools for law enforcement. The first is automated biometric matching of crime footage against all publicly available images on the internet; the second is AI-assisted cross-database analysis linking previously siloed police records. The ministry describes both as "modern investigative tools." Experts describe them as a blueprint for mass surveillance infrastructure that would end anonymity in public digital spaces.

The Database the Ministry Claims It Won't Build

The ministry's official position is carefully worded. Section 98d of the draft specifies that only "existing data" would be searched, that no new super-database would be created, that real-time webcam matching is excluded, and that each case requires an express order from a prosecutor.

Kilian Vieth-Ditlmann of AlgorithmWatch, a European digital rights organization, calls those restrictions a farce. His point is that automated matching of millions of web images in fractions of a second is technically impossible without first creating a structured, searchable database of all available faces. An expert report commissioned by AlgorithmWatch confirms that systematic biometric matching without a pre-processed database is not feasible at any useful scale.

The Bundestag's own Scientific Services reached essentially the same conclusion. The ministry's framing of the tool as a "digital acceleration of manual visual inspection" is, in AlgorithmWatch's words, a dangerous euphemism for infrastructure that would require a biometric inventory of the entire public internet: vacation photos, protest footage, faces in the background of social media posts, and the face of anyone who has ever appeared in a publicly accessible image.
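AlgorithmWatch's technical objection is easier to see in a toy example. The sketch below assumes a generic embedding-based matcher of the kind commonly used for face recognition; the draft bill does not describe a design, and `embed`, `find_matches`, the 128-dimension vectors and the example.org URLs are all hypothetical. Random vectors stand in for faces. What it illustrates is structural: the fast comparison in step 2 only works because step 1 has already stored a face vector for every crawled image.

```python
import math
import random

DIM = 128  # hypothetical embedding size; real models choose their own


def embed(seed: int) -> list[float]:
    """Hypothetical stand-in for a face-embedding model.

    A real system would run a neural network on a detected face; here the
    seed plays the role of "whose face this is", so the same seed always
    yields the same unit-length vector.
    """
    rng = random.Random(seed)
    v = [rng.gauss(0.0, 1.0) for _ in range(DIM)]
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def cosine(a: list[float], b: list[float]) -> float:
    # Both vectors are unit length, so the dot product is the cosine similarity.
    return sum(x * y for x, y in zip(a, b))


# Step 1: crawl images and store one face vector per image, keyed by source URL.
# The URLs are placeholders. Whatever the draft calls it, this gallery is a
# biometric database, and it must exist before any query can be answered quickly.
gallery = {f"https://example.org/photo/{i}": embed(i) for i in range(2_000)}


def find_matches(probe: list[float], gallery: dict[str, list[float]],
                 threshold: float = 0.9) -> list[tuple[str, float]]:
    """Step 2: compare one probe face against every stored vector.

    Without the gallery, each query would first have to re-download and
    re-embed every public image, which is why fast matching presupposes it.
    """
    scored = [(url, cosine(probe, vec)) for url, vec in gallery.items()]
    hits = [(url, s) for url, s in scored if s >= threshold]
    return sorted(hits, key=lambda h: h[1], reverse=True)


# Usage: a frame from "crime footage" showing the same face as photo 42.
probe = embed(42)
print(find_matches(probe, gallery)[0][0])  # prints https://example.org/photo/42
```

Production systems typically replace this linear scan with an approximate nearest-neighbour index to reach sub-second speeds over millions of faces, but that makes the stored gallery more structured, not optional.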

The ministry knows this. It developed the draft alongside Interior Minister Alexander Dobrindt's parallel proposal, which extends identical biometric powers to the BKA (Federal Criminal Police Office) and the Federal Police. The two drafts were submitted together, with a shared April 2 deadline for civil society responses before the cabinet vote. This is a coordinated federal package, not a preliminary exploration.

The Law That Already Said No

The EU AI Act's provisions on prohibited practices have applied since February 2025. Article 5(1)(e) of the regulation bans AI systems that create or expand facial recognition databases through the untargeted scraping of facial images from the internet or CCTV footage. The prohibition is absolute. Unlike the regulation's rules on real-time remote biometric identification in public spaces, which include narrow carve-outs for law enforcement, this ban has no law enforcement exception.

The Bundestag Scientific Services analysis points to a potential loophole the ministry may be eyeing. The AI Act prohibition applies specifically to AI-based database creation, so if the matching could be achieved without AI-based scraping, or if the work were outsourced to a private provider, the regulation's prohibition might not apply on its face. The draft bill explicitly permits transmitting data to private providers both inside and outside the EU for the purpose of biometric matching, which AlgorithmWatch calls an outsourcing permission that reduces all of the bill's stated safeguards to a theoretical facade. A system that can export your biometric data to a non-EU provider to do the matching, then delete its local copy, is not a targeted investigative tool but a liability transfer mechanism.

The second component of the bill, the AI-assisted cross-database analysis under Section 98e, faces a problem that predates any AI Act concerns. In February 2023, Germany's Federal Constitutional Court ruled that provisions in Hesse and Hamburg authorizing automated police data analysis without sufficient intervention thresholds were unconstitutional. The court found that automated data analysis constitutes a separate, serious interference with the right to informational self-determination, and that the laws allowed police to create comprehensive profiles of persons and groups "with just one click," without distinguishing suspects from witnesses, victims, or unrelated bystanders.

The new draft would grant the same kind of power at the federal level, across all police databases nationwide, while Hubig promises that "assessments and decisions" will continue to be made solely by investigators. A court that has already struck down a narrower version of the same power is unlikely to find that framing persuasive.

Everyone Is in the Database

The practical scope of the biometric matching proposal is not limited to people suspected of anything. It covers anyone whose face appears anywhere on the public internet. That is, as AlgorithmWatch observes, the majority of the population.

Images uploaded from demonstrations, political party events, Pride marches, union meetings, and religious gatherings are all fair game. Those images allow inferences about political opinion, party membership, sexual orientation, and religious belief, all of which are protected categories under EU and German constitutional law.

I'd argue, though, that the chilling effect here is not a side effect of the infrastructure; it is built in. Once any government can retroactively identify everyone who attended a protest or a union rally by cross-referencing footage against online photos, the question of whether it will do so becomes secondary to the fact that people will know it can. That knowledge alone is enough to reshape public behavior, and that reshaping is not incidental. It is, for certain political actors, the point.

The bill's threshold for authorizing a match, criminal offenses of "considerable importance," is regarded in legal circles as very broad. The offense catalog it cites, Section 100a of the Code of Criminal Procedure, has been expanded repeatedly and has previously justified surveillance powers that courts later constrained. It is not a narrow gate.

A Pattern Built One Failure at a Time

Germany's security apparatus has been pushing for expanded biometric powers through successive legislative vehicles, each time running into legal constraints and returning with a revised approach. The 2024 security package included similar data-analysis powers, but the Bundesrat blocked the police-powers element while passing the migration-related measures. The new conservative-led coalition is bringing the same powers back in a coordinated federal package covering both investigative and preventive policing nationwide.

Saxon police, meanwhile, deployed a live facial recognition system called PerIS at the Polish border without informing the data protection authority. The authority later found it likely unconstitutional, but by then the system had already been in use.

The EFF documented in 2024 that Germany's law enforcement had been running biometric tools at the edge of legality for years, with legal legitimization always the next objective. The problem is that once the infrastructure is legally enshrined and technically installed, the appetite for broader use follows. That is not a prediction; it is the consistent pattern across every surveillance capability Germany's federal and state police have acquired.

The One Answer Left

AlgorithmWatch's formal submission on the draft bill, and the parallel assessment by the Gesellschaft für Freiheitsrechte, arrive at the same conclusion through independent legal analysis. The constitutional and human rights disproportionality of the biometric matching proposal is not a drafting problem that amendments can correct. The only proportionate response is to withdraw the draft entirely and replace it with a comprehensive statutory ban on biometric mass recognition systems for both public and private entities.

That is a remarkable thing to say about a government's own bill: the argument is not "change this clause" but "the premise is wrong." The civil society consensus, backed by the Federal Constitutional Court's 2023 precedent and by binding EU law in force since February 2025, is that no version of this proposal can be made legal and proportionate. The ministry is not unaware of these constraints. It has simply decided to proceed.

I find it genuinely difficult to be charitable about what that decision signals. Governments that build surveillance infrastructure in the face of explicit constitutional rulings and binding EU prohibitions are not engaged in good-faith security policymaking. They are engaged in a bet that the legal process will move slower than the installation schedule. And if the Federal Constitutional Court has to strike this down too, the tools will have already been tested, the protocols established, and the next version will arrive with the lessons learned.

That is how you normalize what you cannot legalize, and Germany is very much in the middle of trying.

Be part of the resistance, quietly.

Get Mysterium VPN

Dominykas is a technical writer with a mission to bring you information that will help you in keeping your digital privacy and security protected at all times. If there's knowledge that can help keep you safe online, Dominykas will be there to cover it.
