OpenAI's Questionable Pentagon Deal Just Cost the Company Its Robotics Chief

The people most qualified to spot when a technology is being pointed in the wrong direction are usually the ones building it. They know what the systems can do, they understand the gap between a company's stated values and its actual commitments, and they are close enough to the decision-making to know when a process has been skipped. Most of them stay quiet. The ones who don't tend to do it at significant personal cost, which is precisely what makes it worth paying attention to when they speak.

On March 7, 2026, Caitlin Kalinowski, OpenAI's head of robotics and consumer hardware, announced her resignation on X, citing the company's newly formed agreement with the U.S. Department of Defense (recently renamed the Department of War). In her words, "Surveillance of Americans without judicial oversight and lethal autonomy without human authorization were lines that deserved more deliberation than they got."

Signing First, Thinking Later

OpenAI's agreement with the Pentagon grants its AI models access to secure, classified Defense Department computing systems. The deal is part of a broader government push to embed advanced AI into national security infrastructure, and it moved fast enough that senior people inside the company were caught off guard by its scope.

Kalinowski said publicly that policy guardrails around certain AI uses were not sufficiently defined before the announcement landed. That is not a minor process complaint. When the technology in question can be used to surveil civilians or operate weapons without human sign-off, deciding the rules after deployment seems much less like an oversight and a whole lot more like a choice.

The agreement came together in the wake of collapsed negotiations between the Pentagon and Anthropic, which had pushed for strict contractual limits on domestic surveillance and autonomous weapons. The Defense Department, apparently uninterested in those limits, designated Anthropic a supply-chain risk and turned to OpenAI instead. OpenAI said yes without the same conditions attached.

One Person Who Actually Drew a Line

Kalinowski joined OpenAI in November 2024, coming from Meta, where she led the team that built Orion, the company's augmented reality glasses. At OpenAI she built out the robotics organization, hiring for physical AI systems and infrastructure. She was, by any measure, invested in the work, and her resignation was not impulsive.

In her post, she was careful to separate her objection from any personal grievance. She said the decision was about principle, not people, and that she had deep respect for CEO Sam Altman and the team.

What she could not get past was the specific combination of capabilities the deal opens up: AI on classified networks, with surveillance applications and autonomous weapons potential in scope, and no hard contractual guardrails locking those uses out before the ink dried. Someone who spends her days building systems that interact with the physical world understands exactly what "insufficient deliberation" on those questions means in practice.

Promises Without Enforcement Mechanisms Are Just Good PR

OpenAI responded to Kalinowski's resignation with a statement confirming the departure and asserting that the Pentagon agreement creates a workable path for responsible national security uses of AI while making clear its red lines: no mass domestic surveillance, no autonomous weapons, and no high-stakes automated decisions. Sam Altman separately said he would amend the deal to prohibit spying on Americans.

That may sound reassuring at first, but it doesn’t take long to realize that these are promises with no guarantees behind them. The history of government surveillance is a long record of authorities finding that the rules they agreed to in public do not apply in the specific operational context they are currently in.

OpenAI's assurances do not come with an enforcement mechanism, an independent audit requirement, or a consequence for violation. They come with a spokesperson quote. That is not nothing, but it is also not a guardrail. Kalinowski knew that, and that’s exactly why she left.

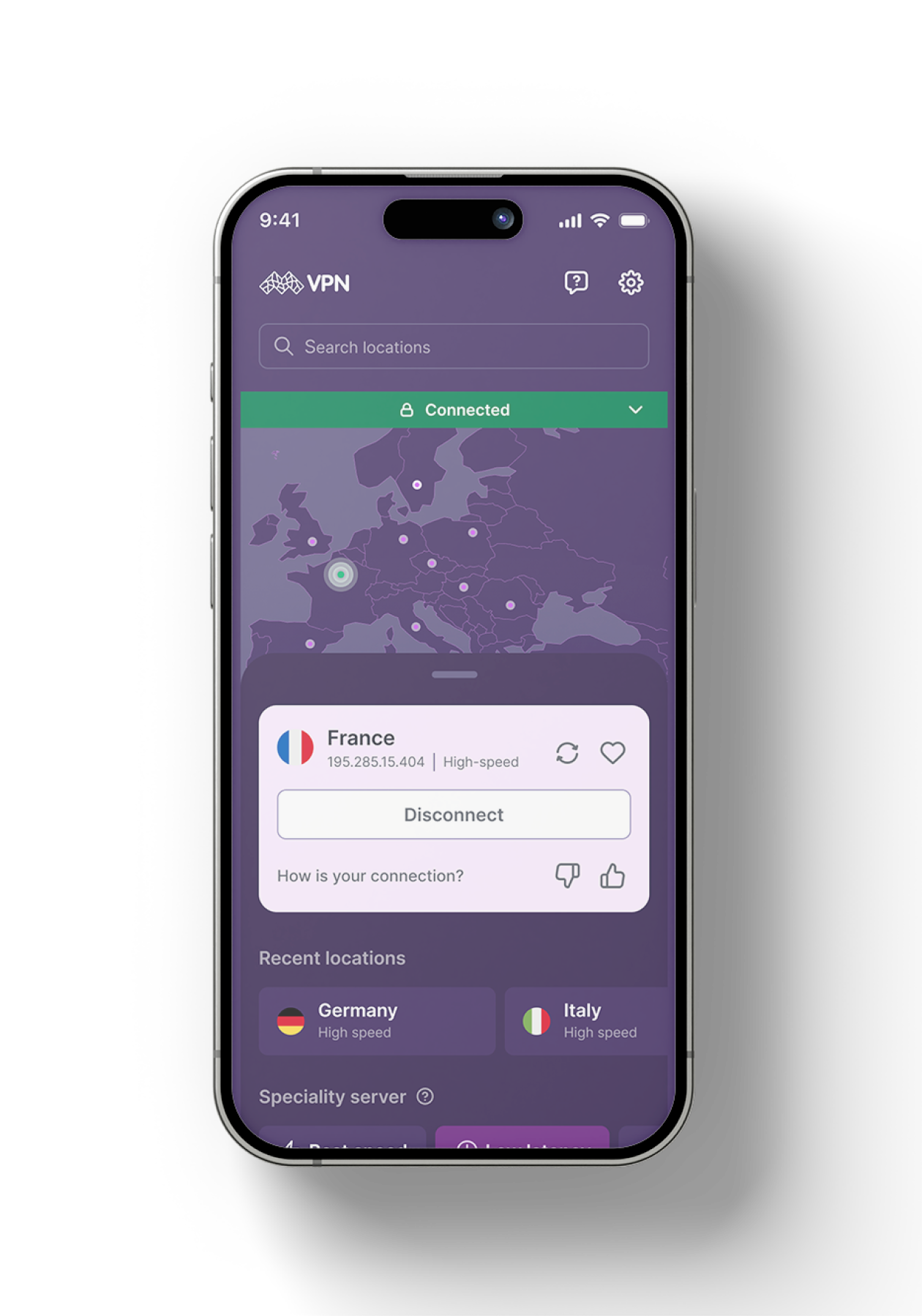

Be part of the resistance, quietly.

Get Mysterium VPN

Dominykas is a technical writer with a mission to bring you information that will help you in keeping your digital privacy and security protected at all times. If there's knowledge that can help keep you safe online, Dominykas will be there to cover it.
