South Korea Just Implemented the First AI Law That Doesn’t Suck

I doubt it counts as a “hot take” that most of the digital safety laws governments have implemented over the past few years have been absolutely terrible, to put it mildly. They all scream, “Think of the children,” and then either turn out to be completely useless or function as mass surveillance tools. And yet, today, we actually have some seriously good news!

On January 22, 2026, something positively weird happened. South Korea put an AI law into effect that doesn't make me want to throw my laptop out the window. The Basic Act on the Development of Artificial Intelligence and Establishment of a Foundation for Trustworthiness (yes, that’s its real name) just became the world's first comprehensive AI law in force that requires companies to actually label AI-generated content.

For once, a piece of digital legislation might genuinely help combat the tsunami of AI-generated garbage flooding the internet instead of just giving governments more power to watch what we're doing. This might just be a much-needed step in the right direction.

An AI Law That Finally Makes Sense

Let’s cut straight to the point. Under Article 31 of the law, AI companies located in or providing services to South Korea have to notify users in advance when they're using high-impact or generative AI systems. More importantly, when AI-generated content, especially deepfakes or synthetic media that's tough to tell from reality, gets pushed out into the world, it has to be clearly labeled as AI-generated.

We're talking about virtual sounds, images, and videos that could easily pass as real. Companies have to mark them in ways that let users clearly recognize what they're looking at. The law does give some flexibility for artistic and creative work, so filmmakers and artists aren't forced to slap ugly disclaimers over their projects, but the core requirement stands. If it's AI and it could fool someone, it needs a label.

The penalties? Up to 30 million won, which is roughly $20,400. It sure could be higher, but it’s not exactly pocket change either, and it will hopefully be enough to make companies actually comply instead of ignoring the rules like they do with half the privacy regulations out there.

This law isn't about forcing you to verify your identity, breaking encryption, or outright banning stuff. It's about transparency. It's about giving people the information they need to make their own decisions about what content to trust. That's it, and this approach is genuinely great.

The Nuance Shows Someone Actually Thought This Through

What impresses me most about this law is that someone clearly put actual thought into how it works. It's not just a blanket "regulate everything" approach that ends up breaking more than it fixes.

First, there's a full-year grace period before companies face any penalties. That gives AI developers and platforms time to build the infrastructure they need to comply instead of scrambling at the last second or just ignoring the rules entirely because they're impossible to implement quickly.

Second, the law specifically targets high-impact AI systems, like the stuff used in healthcare, finance, transportation, and public services where the stakes are genuinely high. It's not trying to regulate every single AI tool under the sun. There's actual focus here.

Third, the law explicitly makes allowances for artistic and creative work. Opinions on AI in art differ, but labels can be applied in ways that don't wreck the viewing experience. A filmmaker doesn't have to plaster "THIS IS AI" across every frame of a movie that uses AI-generated effects. The law recognizes that context matters.

South Korea's also establishing a National AI Committee to oversee implementation and provide balanced oversight. Will it work perfectly? Probably not. Committees never do. But at least there's a governance structure in place to handle edge cases and adjust as the technology evolves.

The government's also supporting AI development through the same law. It’s backing data centers, training data projects, and innovation clusters, all the while requiring transparency. It's not an anti-AI law pretending to be pro-safety but something genuinely trying to foster innovation while building trust.

Why Transparency Actually Matters Right Now

We have all been watching the misinformation problem worsen every year. Deepfakes used to be this sci-fi concept that only tech nerds worried about, but these days, things are seriously getting out of hand.

The numbers are genuinely terrifying. We're looking at an estimated 8 million deepfakes being shared in 2025, up from just 500,000 in 2023. That’s right, we're talking about a sixteen-fold increase in two years. And to make it even better, some projections suggest that in 2026, around 90% of online content could be generated synthetically.
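If you want to sanity-check that math, here is a minimal sketch. It uses only the two estimates quoted above; the variable names are mine, and the "per year" figure assumes steady growth across both years, which the estimates themselves don't claim.

```python
# Figures quoted in the article (estimates, not measured data).
deepfakes_2023 = 500_000
deepfakes_2025 = 8_000_000
years = 2025 - 2023

# Total growth over the whole period.
fold_increase = deepfakes_2025 / deepfakes_2023

# Average yearly multiplier, assuming the same growth rate each year.
annual_factor = fold_increase ** (1 / years)

print(f"Fold increase over {years} years: {fold_increase:.0f}x")
print(f"Implied average growth per year: {annual_factor:.0f}x")
```

Put differently, a sixteen-fold jump in two years works out to roughly quadrupling every year.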

The line between reality and fiction has blurred so much, it’s practically gone. Telling what’s what has become so complicated that only about 9% of adults feel confident in their ability to identify deepfakes. In other words, the vast majority of us are walking around the internet completely vulnerable to being fooled by synthetic content, and we don't even realize it.

The real-world impact isn't some abstract concept either. We've seen deepfakes used in election interference attempts, massive fraud schemes, celebrity scams, and non-consensual content that destroys people's lives.

And clearly, I'm not the only one who thinks so: 84% of people familiar with generative AI think content created with it should always be clearly labeled. People want this. They're not asking for censorship or bans. They just want to know what they're looking at so they can make informed decisions.

That's why South Korea's approach matters. Labeling isn't censorship. It doesn't stop anyone from creating or sharing content. It just adds context. It gives users the power to evaluate what they're seeing instead of being left in the dark. And with the extremely tense state our world is currently in, the importance of this cannot be overstated.

Could This Catch On Like All the Useless Laws Do?

South Korea just became the second place in the world, after the European Union, to pass comprehensive AI legislation, and the first to put it into effect. The EU's already got similar transparency requirements in Article 50 of its AI Act, which mandates marking and labeling of AI-generated content in machine-readable formats, and those rules are set to fully apply by August 2026.
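To make "machine-readable" a bit more concrete, here is a rough sketch of what a label attached to a piece of generated content could look like. Everything in it is an assumption for illustration: the field names, the `build_ai_label` and `label_matches` helpers, and the generator name are all hypothetical, and none of it comes from the Korean law, the EU AI Act, or any existing provenance standard.

```python
import hashlib
import json
from datetime import datetime, timezone


def build_ai_label(content: bytes, generator: str) -> dict:
    """Build a hypothetical disclosure record for a piece of AI-generated content."""
    return {
        "ai_generated": True,
        "generator": generator,
        # The hash ties the label to these exact bytes.
        "content_sha256": hashlib.sha256(content).hexdigest(),
        "labeled_at": datetime.now(timezone.utc).isoformat(),
    }


def label_matches(content: bytes, label: dict) -> bool:
    """Check whether a label was issued for exactly this content."""
    return label.get("content_sha256") == hashlib.sha256(content).hexdigest()


# Placeholder bytes standing in for a generated image file.
image_bytes = b"placeholder bytes standing in for a generated image"
label = build_ai_label(image_bytes, generator="example-image-model")

print(json.dumps(label, indent=2))
print(label_matches(image_bytes, label))              # same bytes
print(label_matches(image_bytes + b"edited", label))  # altered bytes
```

A record like this only shows that a label belongs to a specific file. It does nothing to stop someone from stripping the label, which is part of why real-world schemes tend to lean on signed metadata or watermarks instead.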

The United States is moving slower, but there's movement, too. California's considering AB-3211, which would require device makers to attach provenance metadata to photos and force online platforms to disclose that metadata. Some states have already criminalized certain types of deepfakes. The Senate is looking at federal legislation requiring digital watermarks on AI-generated content. It's messy, but it's happening.

However, what matters the most is that South Korea's approach is fundamentally different from what we've seen in places like China, which implemented deepfake regulations back in 2023 but wrapped them in a censorship-first, security-heavy model that's more about control than transparency.

South Korea's law isn't about control. It's about giving people information. That distinction matters because it sets a precedent for how democracies can regulate AI without sliding into surveillance states.

For the global internet, this could be huge. If more countries adopt similar transparency-focused approaches, we might actually see a shift in how AI companies operate worldwide. Companies don't want to maintain different systems for different markets. If enough major economies require labeling, it becomes easier to just label everything globally instead of geofencing features.

Of course, I'm only cautiously optimistic, because we've been drowning in bad digital laws for years now. Every time a government announces new internet regulations that amount to some combination of surveillance expansion, data collection mandates, or restrictions that make the internet worse while claiming to make it safer, everyone takes notes. Yet when we get something that actually makes sense, they can’t seem to find their pens.

But in reality, this is the kind of regulation we should be pushing for. It won’t solve the misinformation crisis on its own, but it's a much-needed step in the right direction, and hopefully, it catches on. If other countries follow South Korea's lead instead of copying Australia's surveillance playbook or doing nothing at all, we might actually have hope for reviving the internet. And that would be genuinely worth celebrating.

Be part of the resistance, quietly.

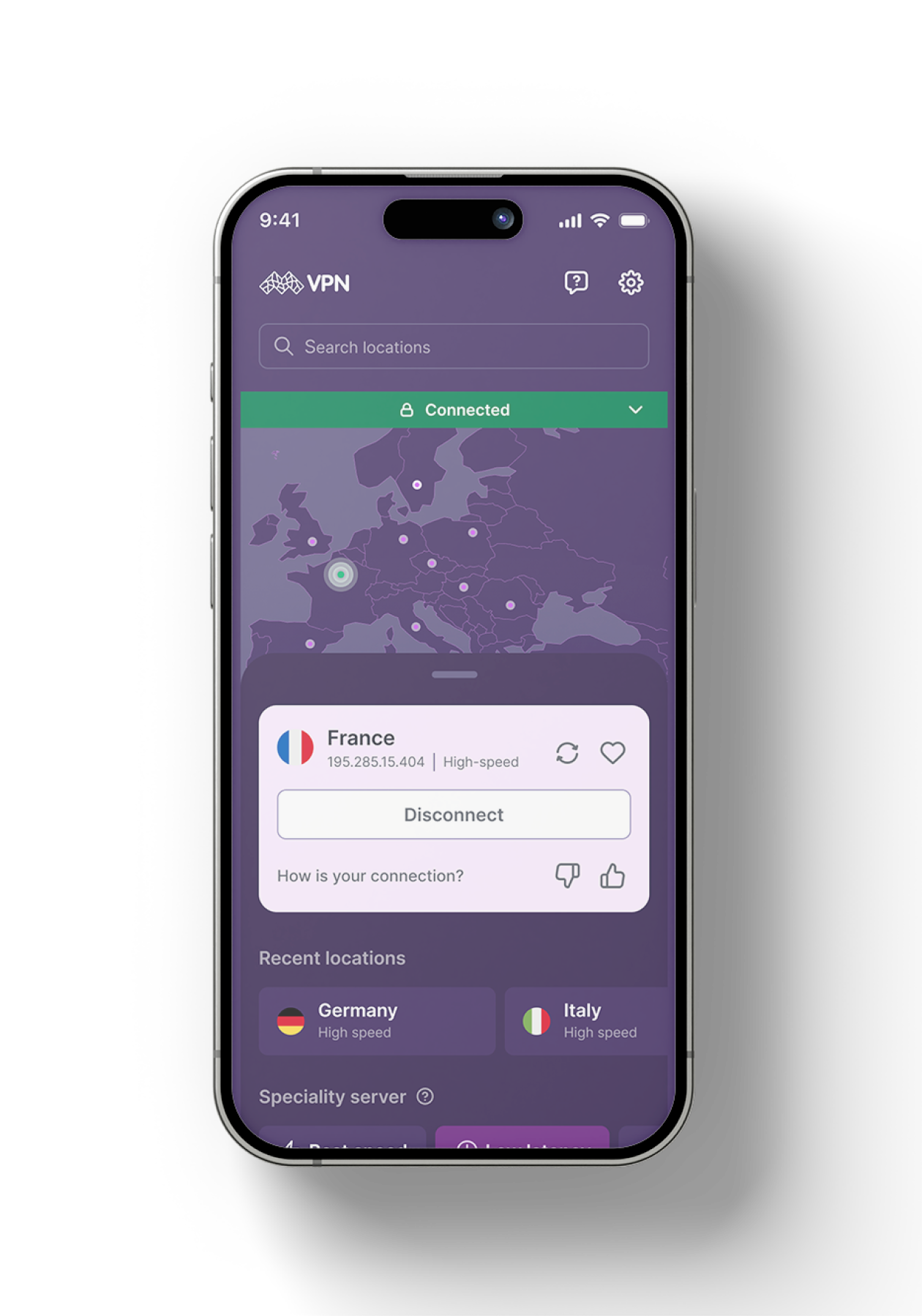

Get Mysterium VPN

Dominykas is a technical writer with a mission to bring you information that will help you in keeping your digital privacy and security protected at all times. If there's knowledge that can help keep you safe online, Dominykas will be there to cover it.
